Aaron Carroll has some wise words to keep in mind when interpreting studies of the Medicaid or uninsured populations:

Insurance doesn’t equal care. Insurance can affect how likely you are to get care and how quickly you might get it. But any study that looks at insurance has to adjust for many, many other variables in order to get the true effect of insurance. …

Surgery is different than other types of care (like emergency care) in that it is harder to refuse. So it may be that the uninsured are getting care on a compassionate basis. Few would provide a screening mammogram or yearly colonoscopy to someone uninsured, however, and you would get that with Medicaid.

He also cautions against taking conference abstracts too seriously:

[L]ess than 45% of the research presented [at the 1998 and 1999 Pediatric Academic Society meetings] was published in a peer-reviewed journal in the next four to five years. So over half of what was presented at the meeting never was “really” published.

I’m not saying the results … aren’t valid. I’m saying I can’t tell. And neither can you, without more information. The peer review for a meeting just isn’t the same as for full publication. You have less time, different criteria, and almost nothing by which to judge the work. Ideally, meetings would stop publicizing abstracts as if they were full studies, but neither they, nor the press, seem likely to do so.

So, be wary of conference abstracts. Actually, be wary of peer-reviewed publications too. The strongest conclusions are based on a body of work collected in an unbiased fashion. It’s not uncommon for papers to draw conflicting conclusions. But if an overwhelming majority of papers in an area point in the same direction, then it’s reasonable to think they’re on to something. Of course, methodology matters. It is possible that most scholars in an area are doing something wrong and only a few recent papers actually get it right. So, it is no easy task to interpret the academic literature.

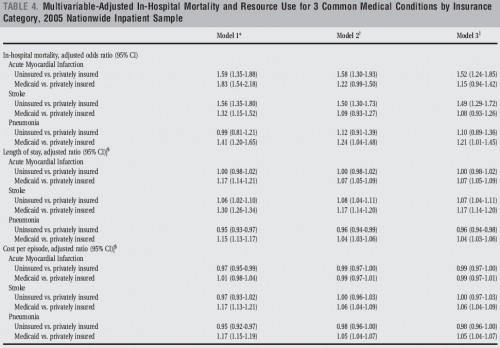

That’s a good segue to this interesting set of results from a recent paper in the Journal of Hospital Medicine, “Insurance status and hospital care for myocardial infarction, stroke, and pneumonia,” by Omar Hasan, E. John Orav, and LeRoi S. Hicks. Study the accompanying figure carefully.

Ignore model 2. Model 1 is adjusted for age group, sex, race, income, emergency admission, and weekend admission and for hospitals’ bed size, control, region, and teaching status. That sounds like a lot of risk adjustment. But model 3 has a lot more. It also adjusts for comorbidities, severity of principal diagnosis, and the proportion of uninsured and Medicaid patients in each hospital.

Now take a close look at the first set of results, for acute myocardial infarction. The relative sizes of the odds ratios for uninsured vs. privately insured and Medicaid vs. privately insured switch between models 1 and 3. Many of the other results change quite a bit between the two models too. There are two lessons here. One is that risk adjustment really matters. The other is that we can’t be certain that even model 3 adjusts for enough. There may be unobservable characteristics that bias the results.
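
To see how much risk adjustment can move an odds ratio, here is a minimal sketch. The counts are invented for illustration and are not from the Hasan, Orav, and Hicks paper. It also swaps in a simpler technique than the paper's regression models: a Mantel-Haenszel odds ratio stratified on a single hypothetical severity variable. The point is only that a crude comparison and a severity-adjusted one can tell different stories.

```python
# Hypothetical counts, invented for illustration; NOT data from the paper.
# Each stratum holds:
# (deaths_uninsured, survivors_uninsured, deaths_private, survivors_private)
STRATA = {
    "low severity": (10, 190, 40, 760),
    "high severity": (160, 640, 40, 160),
}


def odds_ratio(a, b, c, d):
    """Odds of death for the uninsured divided by odds for the privately insured."""
    return (a * d) / (b * c)


def crude_odds_ratio(strata):
    """Pool every stratum into a single 2x2 table, ignoring severity."""
    a, b, c, d = (sum(column) for column in zip(*strata.values()))
    return odds_ratio(a, b, c, d)


def mantel_haenszel_odds_ratio(strata):
    """Severity-adjusted odds ratio: a weighted combination of the stratum tables."""
    numerator = sum(a * d / (a + b + c + d) for a, b, c, d in strata.values())
    denominator = sum(b * c / (a + b + c + d) for a, b, c, d in strata.values())
    return numerator / denominator


print(f"crude OR:    {crude_odds_ratio(STRATA):.2f}")            # 2.36
print(f"adjusted OR: {mantel_haenszel_odds_ratio(STRATA):.2f}")  # 1.00
```

In this made-up example the uninsured look more than twice as likely to die, but only because they are concentrated in the high-severity stratum. Within each stratum the odds are identical. Had severity gone unrecorded, no amount of adjustment could have revealed that.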

The only way to fully address the bias due to selection into the Medicaid, uninsured, and privately insured groups is to find a source of exogenous, random variation in insurance status. That could be supplied by a randomized trial or by sound instrumental variables techniques.
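
A small simulation can make the instrumental variables idea concrete. Everything in it is an assumption made for illustration: the lottery-style instrument `z`, the unobserved sickness `u`, the outcome score `y`, and the true effect of -1.0 are all hypothetical, and none of it comes from the paper discussed here. The sketch uses the Wald estimator, which is the simplest instrumental variables estimator for a binary instrument.

```python
import random

TRUE_EFFECT = -1.0  # assumed effect of coverage on a "bad outcome" score


def simulate(n=200_000, seed=0):
    """Generate hypothetical (instrument, coverage, outcome) rows."""
    rng = random.Random(seed)
    rows = []
    for _ in range(n):
        z = 1 if rng.random() < 0.5 else 0  # random, lottery-style instrument
        u = rng.gauss(0, 1)                 # unobserved sickness
        # Sicker people and lottery winners are more likely to be covered.
        d = 1 if z + u + rng.gauss(0, 1) > 0.5 else 0
        # Sickness worsens the outcome; coverage improves it by TRUE_EFFECT.
        y = 2.0 * u + TRUE_EFFECT * d + rng.gauss(0, 1)
        rows.append((z, d, y))
    return rows


def mean(values):
    values = list(values)
    return sum(values) / len(values)


def naive_estimate(rows):
    """Covered minus uncovered mean outcome; confounded by sickness."""
    covered = mean(y for _, d, y in rows if d == 1)
    uncovered = mean(y for _, d, y in rows if d == 0)
    return covered - uncovered


def wald_estimate(rows):
    """Effect of z on the outcome divided by effect of z on coverage."""
    reduced_form = (mean(y for z, _, y in rows if z == 1)
                    - mean(y for z, _, y in rows if z == 0))
    first_stage = (mean(d for z, d, _ in rows if z == 1)
                   - mean(d for z, d, _ in rows if z == 0))
    return reduced_form / first_stage


rows = simulate()
print(f"true effect:    {TRUE_EFFECT:.2f}")
print(f"naive estimate: {naive_estimate(rows):.2f}")
print(f"Wald estimate:  {wald_estimate(rows):.2f}")
```

Under these assumptions the naive comparison makes coverage look harmful, because the covered are sicker in ways the analyst cannot see. The Wald estimate lands close to the assumed true effect. That only works because `z` is random and affects the outcome solely through coverage. With real data, those are exactly the conditions that are hard to defend.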

Another thing to note is that in the results presented, Medicaid patients have better mortality outcomes than the uninsured for stroke and AMI (model 3). So, from this study alone, one might be tempted to conclude that Medicaid isn’t as bad as some other studies may suggest. But again, should we make a big deal out of this cherry-picked study? No, we certainly should not.