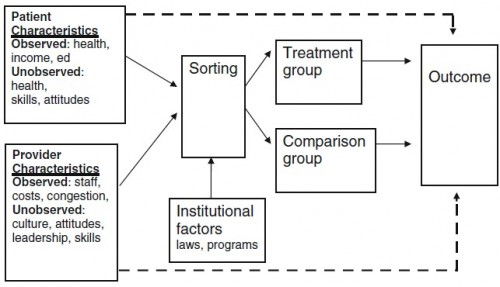

The figure above, taken from a paper by Steve Pizer, is a conceptual model for a generic observational study of some health-related treatment or intervention. The arrows are to be interpreted as causal influences. (I’ll explain later why some lines are dashed.)

Suppose “treatment” is “Medicaid enrollment” and “comparison” is “uninsured.” Since we do not have the luxury of randomly assigning individuals to Medicaid (treatment) or uninsured (control), we must base our studies on populations that self-select into these two groups.

That self-selection process is represented by the “sorting” box in the diagram. Individuals are eligible for and motivated to enroll in Medicaid (or remain uninsured) for many reasons, related to individual factors (like health status, income, etc.) and institutional factors (like Medicaid eligibility rules). Provider characteristics might also play a role if providers are more or less motivated to assist patients in enrolling in Medicaid.

If this were a randomized trial, the sorting into treatment and control would be independent of all patient, provider, and institutional factors. I wouldn’t even put them in the diagram. They’d be irrelevant. But, like I said, Medicaid enrollment or being uninsured is not random. Worse, some of the factors that affect self-selection into Medicaid or uninsured status also affect (health and non-health) outcomes. This is a source of selection bias. For example, if sicker individuals are more motivated to enroll in Medicaid, we will find that Medicaid is correlated with worse health outcomes. But that’s a selection effect, not an effect of Medicaid.
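To make the selection effect concrete, here is a minimal simulation sketch. Everything in it is made up for illustration: the `sickness` index, the enrollment rule, and the assumed positive `true_effect` of Medicaid on a health score are hypothetical and come from no actual study.

```python
import numpy as np

rng = np.random.default_rng(0)
n = 100_000
true_effect = 0.5  # assumption: Medicaid truly improves the health score

# Hypothetical sickness index; sicker people are more likely to enroll.
sickness = rng.normal(size=n)
p_enroll = 1.0 / (1.0 + np.exp(-1.5 * sickness))
medicaid = rng.random(n) < p_enroll

# Health score: helped by Medicaid, hurt by sickness, plus noise.
health = true_effect * medicaid - 2.0 * sickness + rng.normal(size=n)

# Naive comparison of group means, as in an uncontrolled observational study.
naive_gap = health[medicaid].mean() - health[~medicaid].mean()
print(f"true effect: {true_effect:+.2f}, naive gap: {naive_gap:+.2f}")
```

Even though Medicaid helps by construction in this toy world, the naive gap comes out negative, because the enrolled group started out sicker.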

What’s a researcher to do? Well, if one can observe (measure) the things that affect sorting and outcomes, one can control for them. There are well-established statistical ways of doing this (I won’t go into them, but Steve has). But here’s the kicker: there are some factors that affect sorting and outcomes that we cannot even observe in data. There are unmeasured aspects of health, skills, attitudes, and culture, among other things, that relate to both sorting and outcomes. Unobservably sicker individuals may enroll in Medicaid. Or unobservable characteristics (quality) of the providers that patients visit may be related to both Medicaid enrollment and health outcomes. The dashed lines in the figure emphasize this effect of unobservable factors on the outcome of interest.
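A rough sketch of why controlling for observables is not enough, again on simulated data. The split into `observed_sickness` and `unobserved_sickness`, the enrollment rule, and the small `ols` helper are all assumptions for illustration. Plain least squares stands in here for whatever control method a real study would use.

```python
import numpy as np

rng = np.random.default_rng(1)
n = 100_000
true_effect = 0.5  # assumption: Medicaid truly improves the health score

# Two hypothetical components of sickness; only one shows up in the data.
observed_sickness = rng.normal(size=n)
unobserved_sickness = rng.normal(size=n)

# Both components push people toward enrolling.
latent = observed_sickness + unobserved_sickness + rng.normal(size=n)
medicaid = (latent > 0).astype(float)

health = (true_effect * medicaid - observed_sickness
          - unobserved_sickness + rng.normal(size=n))

def ols(y, *regressors):
    """Least-squares coefficients, intercept first."""
    X = np.column_stack([np.ones(len(y)), *regressors])
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    return beta

b_naive = ols(health, medicaid)[1]                          # no controls
b_controlled = ols(health, medicaid, observed_sickness)[1]  # observables only

print(f"true {true_effect:+.2f}, naive {b_naive:+.2f}, "
      f"controlled {b_controlled:+.2f}")
```

In this setup the controlled estimate moves toward the truth but stays below it, since the unobserved component of sickness still drives both enrollment and health.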

Thus, even a study with quite sophisticated statistical controls for observed factors can reveal correlations that should not be interpreted causally, because of selection on unobservables. However, there is a remedy for this problem too. It is based on the fact that some institutional factors that affect sorting do not affect outcomes except through sorting (there is no line from the institutional factors box to the outcome box in the figure). By teasing out the effect of such institutional factors on the sorting mechanism and using just that aspect of sorting to infer the relationship between treatment and outcomes, one obtains an estimate free of the confounding effects of observed and unobserved characteristics. This is the instrumental variables (IV) approach.
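Here is a bare-bones sketch of the IV idea using two-stage least squares on simulated data. The instrument `generous_rules` (a hypothetical indicator for living under more generous eligibility rules), the enrollment rule, and the `ols` helper are all assumptions for illustration. By construction, the instrument affects enrollment but not health directly. The sketch produces only point estimates; a hand-rolled second stage like this does not give correct standard errors.

```python
import numpy as np

rng = np.random.default_rng(2)
n = 200_000
true_effect = 0.5  # assumption: Medicaid truly improves the health score

unobserved_sickness = rng.normal(size=n)

# Hypothetical instrument: generous eligibility rules, assigned independently
# of sickness, with no direct effect on health.
generous_rules = rng.integers(0, 2, size=n).astype(float)

# Enrollment depends on the rules and on unobserved sickness.
latent = (1.5 * generous_rules - 0.75 + unobserved_sickness
          + rng.normal(size=n))
medicaid = (latent > 0).astype(float)

health = true_effect * medicaid - unobserved_sickness + rng.normal(size=n)

def ols(y, *regressors):
    """Least-squares coefficients, intercept first."""
    X = np.column_stack([np.ones(len(y)), *regressors])
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    return beta

b_ols = ols(health, medicaid)[1]  # biased by unobserved sickness

# Stage 1: predict enrollment from the instrument alone.
a0, a1 = ols(medicaid, generous_rules)
medicaid_hat = a0 + a1 * generous_rules

# Stage 2: regress health on the predicted part of enrollment.
b_iv = ols(health, medicaid_hat)[1]

print(f"true {true_effect:+.2f}, OLS {b_ols:+.2f}, IV {b_iv:+.2f}")
```

The OLS coefficient has the wrong sign here, while the IV estimate lands close to the assumed true effect, because it uses only the variation in enrollment that comes from the eligibility rules.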

I’m obviously glossing over the technical nitty-gritty of how one actually implements an IV estimation strategy. One can find that in the literature. Such approaches have been applied to the very question used as an example above: what’s the effect of Medicaid on health? I’ve already posted about them and explained a little about why and how they work. After that review, I concluded that studies that do not address selection into Medicaid on unobservables (i.e., do not employ an IV or other quasi-randomized design) are likely biased. If such studies show that Medicaid is associated with worse health outcomes than being uninsured, it would be a mistake to interpret that as a causal effect of Medicaid on health.