New York tracks and reports the mortality rates of heart surgeons. And great news: Operative mortality is going down! But is this because surgeons are ‘cherry picking’ only the least sick patients?

The idea behind public reporting of mortality rates was to allow patients to select the best doctors and to pressure doctors to improve their practice. How well did it work?

From Robert Kolker:

At first glance, the system seems to have made an enormous difference. Although there’s no satisfying way to compare our risk-adjusted death rates with those of other states (most of which only have data from Medicare patients, who tend to be sicker and therefore skew the sample), the most recent data suggest that in the past fifteen years, New York’s coronary-bypass surgeons have improved their mortality rates to the point that they are, on average, just one-third of what they were.

That’s an amazingly good result. But what produced it? Surgery is almost certainly getting safer. However, a surgeon can also reduce her operative mortality rate by choosing not to operate on the patients who are sickest and least likely to survive.

[T]he statisticians who devised the report cards [on surgical outcomes] have been tormented by a persistent, intractable glitch in the system: It involves human beings. From the start, it was clear that surgeons’ careers were on the line as well as patients’ lives, and even before the first set of data was released, leaders in the heart-surgery community warned with an air of eerie certainty that the threat of public exposure would create a chilling effect—influencing surgeons to turn their backs on the sickest patients in order to prop up their personal success records.

Kolker provides several reasons to believe that New York surgeons are selectively avoiding the sickest patients.

Kolker’s reporting seems broadly consistent with research by Karen Joynt, Ashish K. Jha, and their colleagues, who asked whether public reporting of outcomes for percutaneous coronary intervention (PCI) was associated with fewer PCIs being performed on patients who had suffered heart attacks. They found that

[a]mong Medicare beneficiaries with acute [heart attacks], the use of PCI was lower for patients treated in 3 states with public reporting of PCI outcomes compared with patients treated in 7 regional control states without public reporting.

However, Shin-Yi Chou, Mary Deily, and Suhui Lu looked at the effect of online publishing of coronary artery bypass graft (CABG) outcomes in Pennsylvania, and found more encouraging results. They compared outcomes before and after online reporting began, and examined how those changes varied across regions of Pennsylvania.

To understand Chou et al.’s study, recall that one of the goals of publishing information about outcomes is to encourage patients to choose providers based on the quality of care. If patients select providers based on better outcomes, we would expect providers to adapt by improving those outcomes. But this won’t work in markets dominated by a single provider, where patients have no choice. So Chou et al. hypothesized that in regions where there was more competition between health care systems, providers would compete with each other by working to improve outcomes. Therefore, they would expect greater improvements in outcomes in more competitive markets.

Chou et al. found

a very robust shift in CABG outcomes at the time of online publication [of outcomes data] in more competitive markets, suggesting that improved quality information caused hospitals in more competitive markets to use more resources to provide better health outcomes for Medicare patients. Specifically, in more competitive hospital markets the online publication of CABG report cards resulted in a roughly 5-10% reduction in mortality at an additional cost of approximately two thousand dollars per case.

Moreover, they found no evidence that the patients who received CABG surgery were systematically healthier after report cards went online, as would be the case if surgeons were selectively refusing to operate on severely ill patients. In summary, publishing outcomes in Pennsylvania appeared to result in better care and better outcomes for heart patients, including the severely ill.
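To make the logic of that before-and-after comparison concrete, here is a minimal sketch in Python. It is not Chou et al.’s actual statistical model, which is far more involved. Every number in it is made up for illustration, and the function name `diff_in_diff` and the two market labels are my own assumptions.

```python
def diff_in_diff(treated_before, treated_after, control_before, control_after):
    """Change in the group of interest minus the change in the comparison group."""
    return (treated_after - treated_before) - (control_after - control_before)


# Hypothetical CABG mortality rates (fraction of patients who die).
# These are illustrative values, NOT figures from the study.
competitive = {"before": 0.040, "after": 0.036}    # more competitive markets
concentrated = {"before": 0.040, "after": 0.039}   # less competitive markets

# How much more did mortality fall in competitive markets than elsewhere?
effect = diff_in_diff(
    competitive["before"], competitive["after"],
    concentrated["before"], concentrated["after"],
)

# Express the extra change relative to the competitive markets' starting rate.
relative_effect = effect / competitive["before"]

print(f"extra change in mortality: {effect:+.4f}")
print(f"relative to baseline:      {relative_effect:+.1%}")
```

The point of subtracting the change in less competitive markets is to net out whatever was improving everywhere, such as surgery simply getting safer over time.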

Clearly, more data are needed. Chou et al.’s results are encouraging if you support publication of outcomes data, but their inferences rest on intensive statistical modeling of observational data, rather than on a designed experiment or a natural experiment.

But for the sake of argument, let’s suppose it’s true that, as Kolker suggests, public reporting of outcomes leads surgeons to avoid operating on more severely ill patients. What should we think about this?

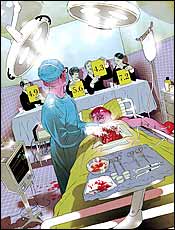

The image accompanying Kolker’s article (above) suggests that New York surgeons are functioning as a “death panel,” advancing their careers at the expense of the direly ill. However, to make the case that surgeons are killing patients, we need evidence that surgeons’ decisions are leading to a higher death rate among patients.

Joynt and her colleagues looked at that. If public reporting of outcomes leads surgeons to not operate on sicker patients, and if not getting surgery kills those patients, then we would expect higher mortality rates for heart patients in states with public reporting. But Joynt et al. found that

there was no difference in overall acute [heart attack] mortality between states with and without public reporting.

Again, more data are needed to confirm this. Nevertheless, the lack of a difference in mortality means that it’s at least possible that surgeons are accurately judging whether patients would benefit from surgery. That is, perhaps they are declining to operate on those patients for whom the operative risk and cumulative trauma of surgery are such that the patient would gain no additional life from the procedure.
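The distinction between operative mortality (deaths among patients who get surgery) and overall mortality (deaths among all heart patients) is easy to lose, so here is a small arithmetic sketch in Python. The patient groups, their sizes, and their risks are entirely hypothetical, and the names `Group`, `operative_mortality`, and `overall_mortality` are mine. It shows only that avoiding the sickest patients can lower a surgeon's reported rate while leaving overall mortality unchanged, provided surgery would not have helped those patients.

```python
from dataclasses import dataclass


@dataclass
class Group:
    name: str
    n: int                  # number of patients in the group
    risk_surgery: float     # probability of death if operated on
    risk_no_surgery: float  # probability of death if not operated on


def operative_mortality(groups, operated):
    """Deaths among operated patients divided by the number operated on."""
    ops = [g for g in groups if g.name in operated]
    deaths = sum(g.n * g.risk_surgery for g in ops)
    return deaths / sum(g.n for g in ops)


def overall_mortality(groups, operated):
    """Deaths among ALL patients, whether or not they had surgery."""
    deaths = sum(
        g.n * (g.risk_surgery if g.name in operated else g.risk_no_surgery)
        for g in groups
    )
    return deaths / sum(g.n for g in groups)


# Hypothetical numbers: the sickest patients gain nothing from surgery here.
patients = [
    Group("healthier", n=900, risk_surgery=0.02, risk_no_surgery=0.10),
    Group("sickest", n=100, risk_surgery=0.30, risk_no_surgery=0.30),
]

for operated in ({"healthier", "sickest"}, {"healthier"}):
    print(
        sorted(operated),
        "operative:", round(operative_mortality(patients, operated), 4),
        "overall:", round(overall_mortality(patients, operated), 4),
    )
```

If you instead set the sickest group's `risk_no_surgery` above its `risk_surgery`, overall mortality rises when surgeons decline to operate. That is the pattern we would need to see in the data to conclude that avoiding those patients is costing lives.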

My bottom line is that it is premature to conclude that surgeons are letting patients die to advance their careers. The question is nevertheless important and I urge you to read Kolker’s article. For more TIE posts on the complexities of using outcomes to improve quality, see here, here, and here. For more on the importance of competition among hospitals for improving health care quality, see here.

UPDATE: You’ll find a discussion of this post on Reddit.