Recently, Austin and I wrote about problems in the reporting of medical research. We asked,

How can we get serious about creating an open, valid, and reliable scientific literature?

Among the reforms we discussed was the

Registration of [clinical] trials and reporting of all registered analysis (or clear metrics of the extent to which they are not reported);

But how much difference does registration of clinical trials really make?

It makes a lot of difference, according to a study from Robert Kaplan and Veronica Irvin:

Methods

We identified all large NHLBI supported RCTs between 1970 and 2012 evaluating drugs or dietary supplements for the treatment or prevention of cardiovascular disease. Trials were included if direct costs >$500,000/year, participants were adult humans, and the primary outcome was cardiovascular risk, disease or death. The 55 trials meeting these criteria were coded for whether they were published prior to or after the year 2000, whether they registered in clinicaltrials.gov prior to publication, used active or placebo comparator, and whether or not the trial had industry co-sponsorship. We tabulated whether the study reported a positive, negative, or null result on the primary outcome variable and for total mortality.

The year 2000 is important because

[it] marks the beginning of a natural experiment. After the year 2000, all (100%) of large NHLBI [trials] were registered prospectively in ClinicalTrials.Gov prior to publication.

So how did the outcomes change in NHLBI clinical trials published during and after 2000?

Results

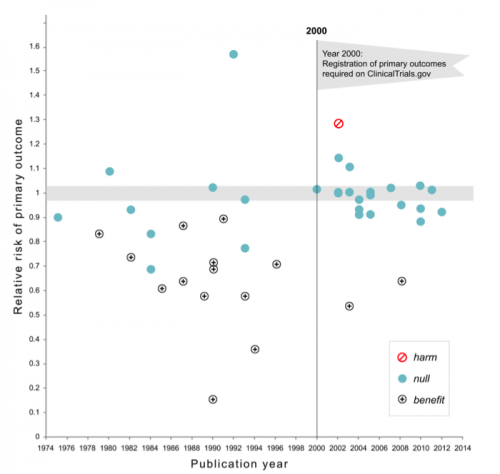

17 of 30 studies (57%) published prior to 2000 showed a significant benefit of intervention on the primary outcome in comparison to only 2 among the 25 (8%) trials published after 2000 (χ² = 12.2, df = 1, p = 0.0005). There has been no change in the proportion of trials that compared treatment to placebo versus active comparator. Industry co-sponsorship was unrelated to the probability of reporting a significant benefit. Pre-registration in clinicaltrials.gov was strongly associated with the trend toward null findings.

The magnitude of this difference is stunning. Here are the findings in a scatterplot. As you can see, the studies reporting benefit nearly vanish after the trial registration requirement took effect.
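For readers who want to check the headline statistic, here is a minimal sketch of a chi-square test on the 2×2 table implied by the counts quoted above (17 of 30 positive before 2000, 2 of 25 after). The quoted excerpt does not say which variant of the test was used; applying the Yates continuity correction is my assumption, and the function name `chi_square_2x2` is made up for illustration.

```python
import math


def chi_square_2x2(a, b, c, d, yates=True):
    """Chi-square test (1 degree of freedom) for the 2x2 table [[a, b], [c, d]].

    Returns the test statistic and its p-value. The Yates continuity
    correction is applied by default; that choice is an assumption here,
    not something stated in the quoted excerpt.
    """
    n = a + b + c + d
    diff = abs(a * d - b * c)
    if yates:
        # Continuity correction: shrink the difference by n/2, never below zero.
        diff = max(0.0, diff - n / 2)
    chi2 = n * diff ** 2 / ((a + b) * (c + d) * (a + c) * (b + d))
    # For 1 degree of freedom, the upper-tail probability is erfc(sqrt(chi2 / 2)).
    p = math.erfc(math.sqrt(chi2 / 2))
    return chi2, p


# Rows: published before 2000, published 2000 or later.
# Columns: significant benefit on the primary outcome, no significant benefit.
chi2, p = chi_square_2x2(17, 13, 2, 23)
print(f"chi-square = {chi2:.1f}, p = {p:.4f}")
```

With the correction, the statistic comes out at about 12.2, in line with the figure reported in the paper. Without it (`yates=False`), the statistic is somewhat larger, so the conclusion does not hinge on that choice.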

Why this change? Kaplan and Irvin discuss several factors that could have explained the results (the article is open access, so go read it). They acknowledge that the design of their study means they cannot claim that the registration requirement caused the dramatic increase in null findings.

Nevertheless, they have a favored explanation:

[The new rule meant that] investigators were required to prospectively declare their primary and secondary outcome variables. Prior to 2000, investigators had a greater opportunity to measure a range of variables and to select the most successful outcomes when reporting their results… Prospective declaration of the primary outcome variable is important because it eliminates the possibility of selecting for reporting an outcome among many different measures included in the study. In order to investigate this issue, we looked at the statistical significance of other variables not declared as the primary outcomes for preregistered studies. Among the 25 pre-registered trials published in 2000 or later, 12 reported significant, positive effects for cardiovascular-related variables other than the primary outcome. Importantly, almost half of the trials might have been able to report a positive result if they had not declared a primary outcome in advance. Had the prospective declaration of a primary outcome not have been required, it is possible that the number of positive studies post-2000 would have looked very similar to the pre-2000 period.

That is, when analysts measure multiple outcome variables, and then select which outcome to report after looking at the results, they are more likely to report a difference that is statistically significant by chance. This is one of the reasons why John Ioannidis famously asserted that “most published research findings are false.” The large drop in the proportion of significant trial results following the registration requirement strongly suggests that prior registration of analytical plans is an essential reform.
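To see how quickly this problem grows, here is a rough sketch of the chance that at least one outcome crosses the significance threshold when a treatment has no real effect on any of them. It assumes the outcomes are statistically independent and each tested at the conventional 0.05 level; real trial outcomes are usually correlated, so treat the numbers as illustrative rather than as an estimate for any particular study. The function name is hypothetical.

```python
def prob_any_false_positive(k, alpha=0.05):
    """Chance that at least one of k independent null outcomes tests 'significant'.

    Assumes independence between outcomes, which is a simplification.
    """
    return 1 - (1 - alpha) ** k


for k in (1, 5, 10, 20):
    print(f"{k:2d} outcomes measured: {prob_any_false_positive(k):.0%} chance of a spurious 'benefit'")
```

Under these assumptions, an analyst free to choose among 10 outcomes after seeing the data has roughly a 40% chance of finding something to report, compared with 5% when a single primary outcome is declared in advance.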

It is also profoundly disturbing that when the data are analyzed conservatively, very few cardiovascular treatment trials demonstrated benefit. Cardiovascular diseases are the leading global cause of death. Is there nothing left to discover here?

Maybe there isn’t, but there is another possibility, suggested to me by Margit Burmeister and David States. They suspect that many medications have strong treatment-genome interactions. If so, the drug (and the dose of the drug) that works for you depends on your genetic profile, and large clinical trials in general populations using a standard drug and dosage are averaging results across people for whom the drug works and those for whom it doesn’t. Those trials are, therefore, built to fail, but a precision medicine approach may succeed. Let’s hope so.