I tried price shopping for health care. It isn’t worth it – not yet.

Last year, I joined an exclusive group – Americans who have price shopped for their health care. And it wasn’t great…

Dual-eligible patients face some of the largest gaps in SUD treatment. The problem isn’t a lack of solutions.

Gene therapies can cure once-incurable diseases, but without payment reform, America’s insurance system will keep them out of reach for many patients.

Six years, five doctors, a hard breakup, a soft landing.

A new FDA program promises ultra-fast drug approvals for “national priorities,” raising questions about politics, science, and public trust.

Five years after hospital price transparency, costs keep rising – showing that publishing prices alone can’t lower spending without enforcement and real competition.

Medicare’s hospital trust fund will be insolvent by 2033. Without change, older adults and hospitals face painful, automatic cuts to inpatient care.

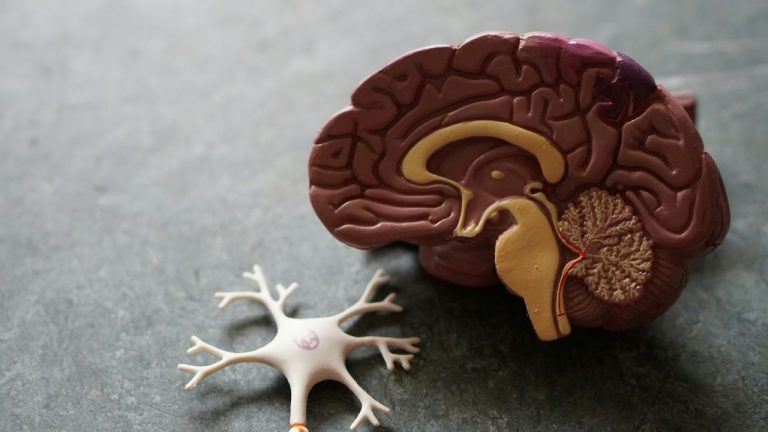

Massachusetts has a chance to lead on state policy around Alzheimer’s and dementia in 2026, if lawmakers choose to prioritize it.

Medicaid retroactive coverage changes shift costs to patients, but hospitals can cushion the impact using 340B funds.

A more useful definition of dementia-friendliness must center on state policy.

Despite popular belief, drug price transparency does not guarantee affordable prices or fair access to medicines.

The public discourse about video games and health largely focuses on the potential risks, but what about the benefits? The answer may surprise you.

Trump’s drug pricing policy promises headlines but not savings – Americans still pay triple, while real reform remains out of reach.